Unload — Feel Lighter, Journal Deeper

Helping you make sense of what's going on inside, connect with your emotions, and track your mental journey. We offer warm, grounded emotional support so you can keep growing — without the pressure.

Project Background: The Emotional Burden of Modern Life

Why did I build this?

This side project started brewing back in 2023. During the pandemic, I really felt the weight of anxiety people were carrying — and that's when I noticed something: most people's emotional struggles aren't because they're "not trying hard enough." It's just that there's never been a quiet place to stop and actually see themselves clearly.

When emotions have nowhere to go, they pile up and become baggage. Over time, baggage becomes stress. And stress, if left alone long enough, becomes illness. I built this product hoping to give people somewhere to let it out.

Product Positioning

Built around the framework of "task separation," Unload helps users figure out which emotions are theirs to carry — and which ones aren't. It's not a therapy tool. It's not a diary app. It's a mobile space for emotional awareness — a place where your feelings can finally be seen.

But gut feeling alone isn't enough to build a product on. Before writing a single line of code, I ran a full round of user research to make sure this gap was real.

Research

Research Background

It all started with one simple question: why do so many people struggle emotionally, yet no tool out there actually works for them?

To answer that, I did a full round of secondary literature research plus first-hand interviews — 2 people with counseling experience and 3 practicing therapists — so I could understand the structural problems in this space from both the supply and demand sides.

Finding #1: People Who Need Help Can't Take That First Step

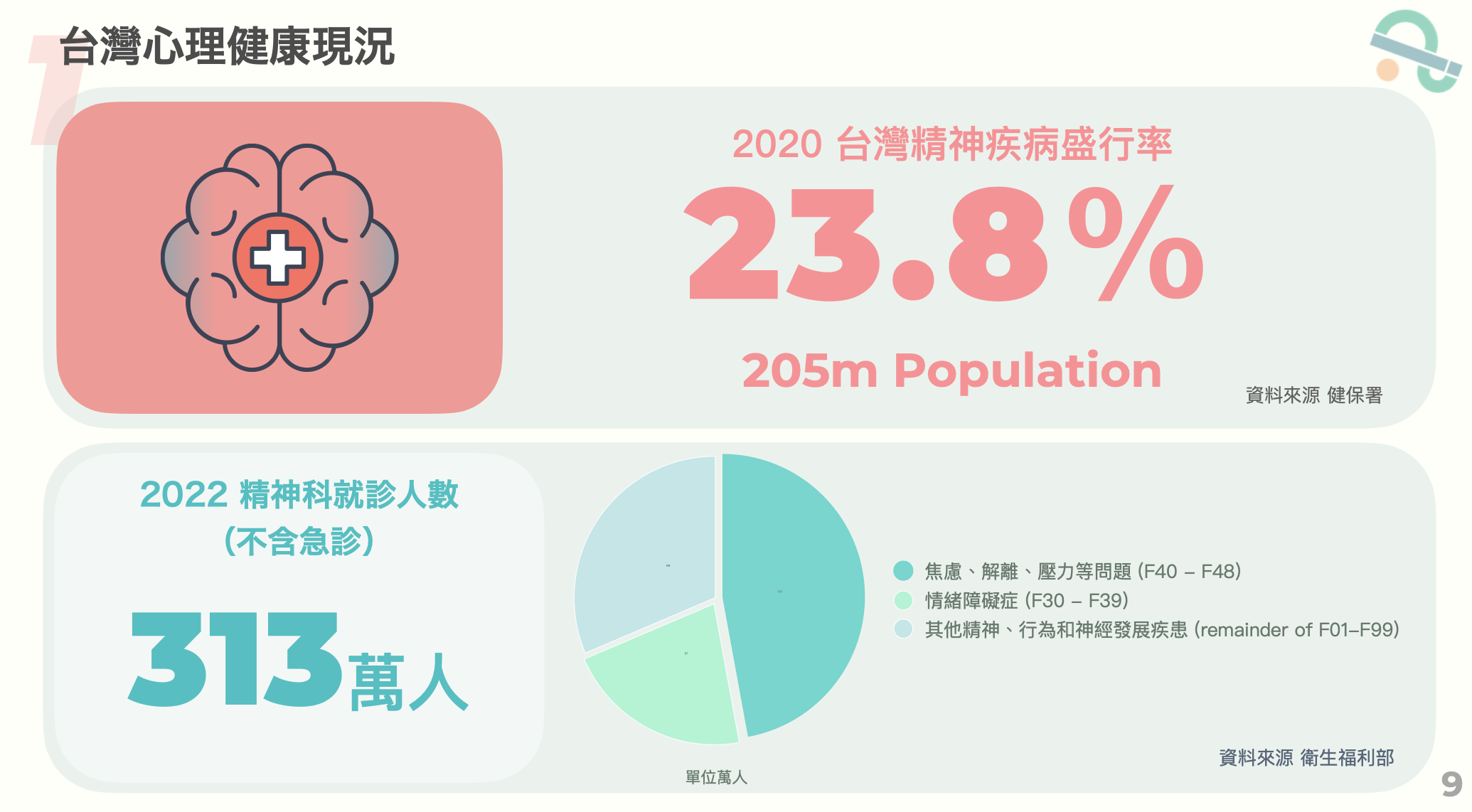

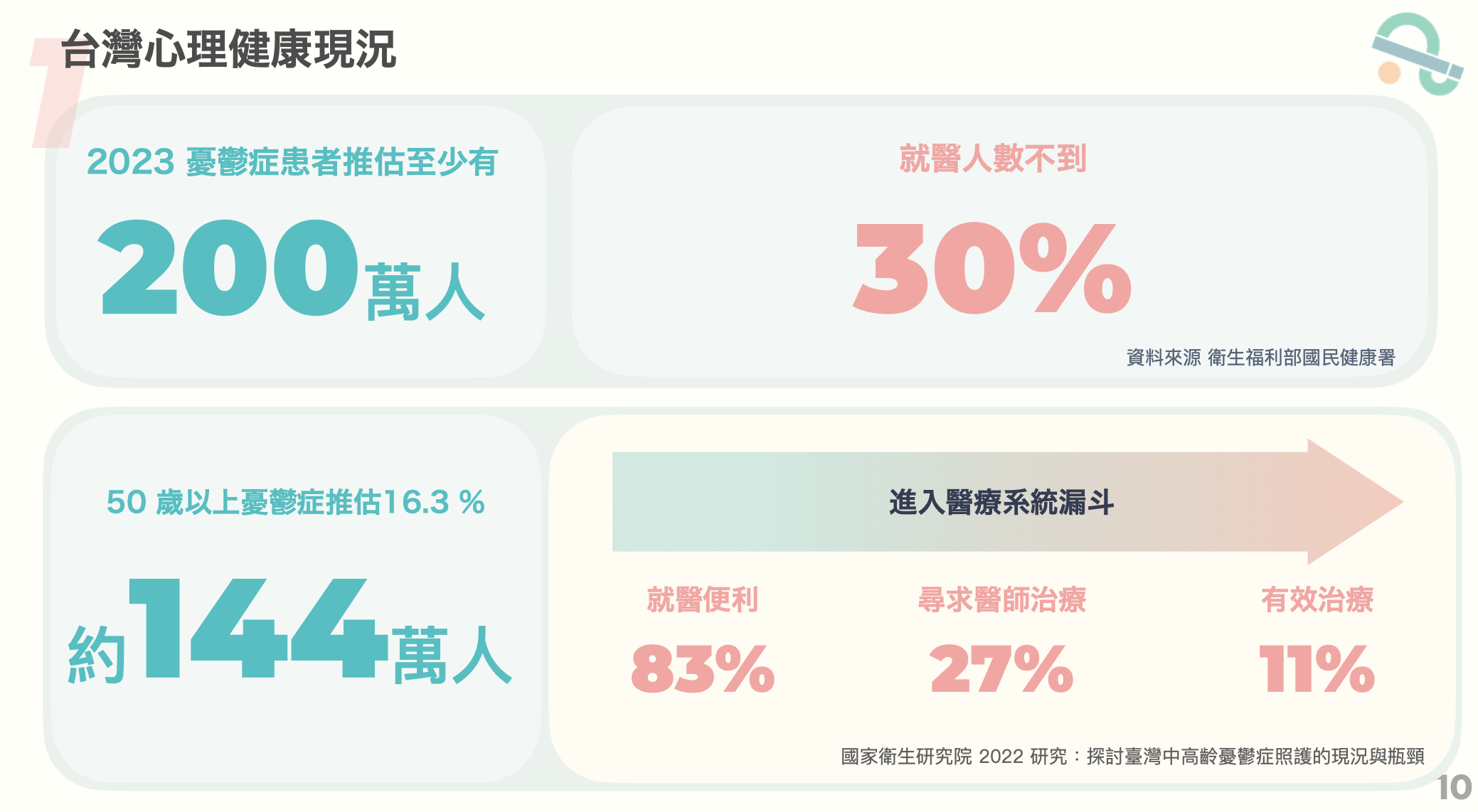

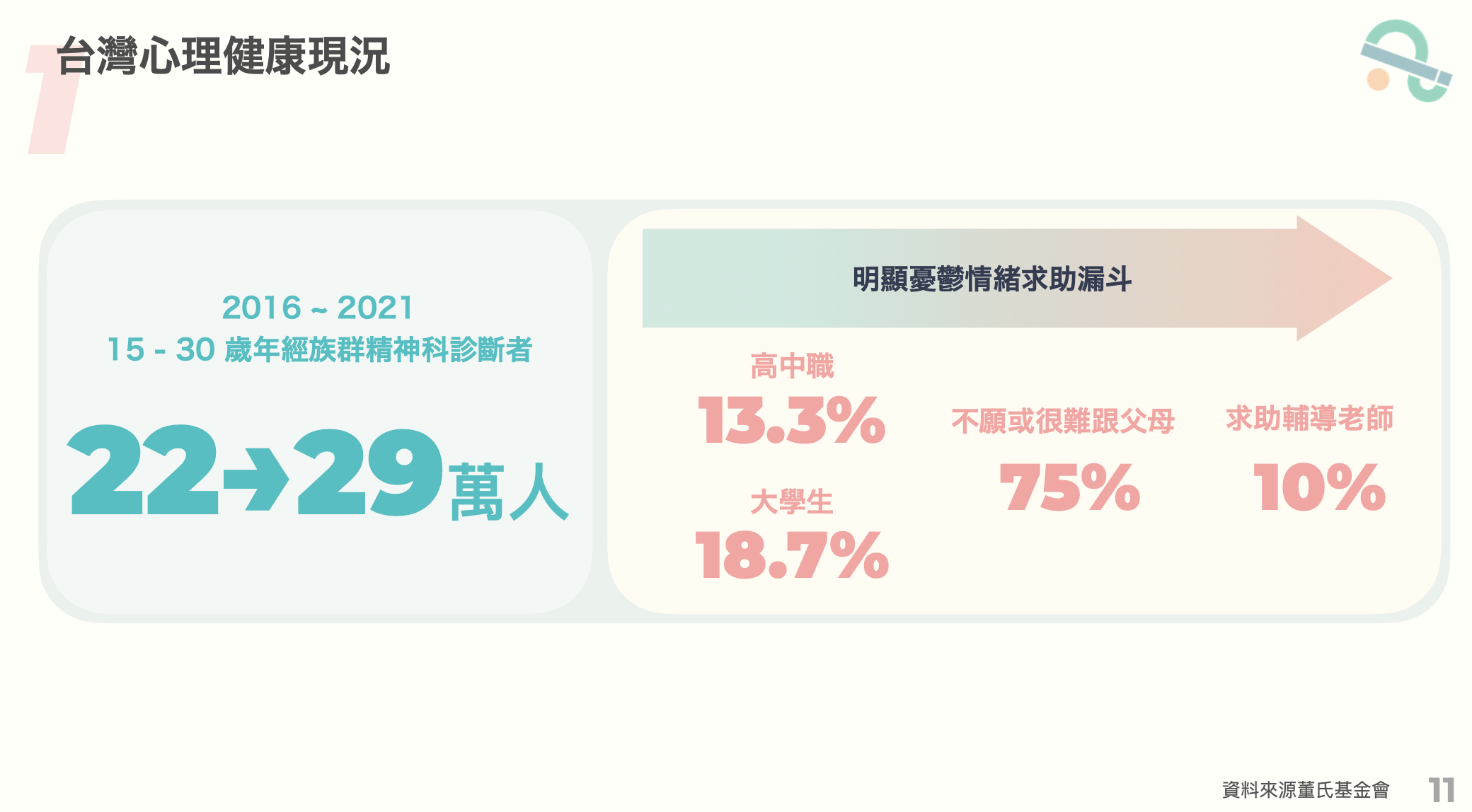

It's estimated that over 2 million people in Taiwan have depression, but fewer than 30% actually seek help. Among people aged 15–30, psychiatric diagnoses jumped from 220,000 to 290,000 within five years — yet over 70% still won't talk to anyone around them about it.

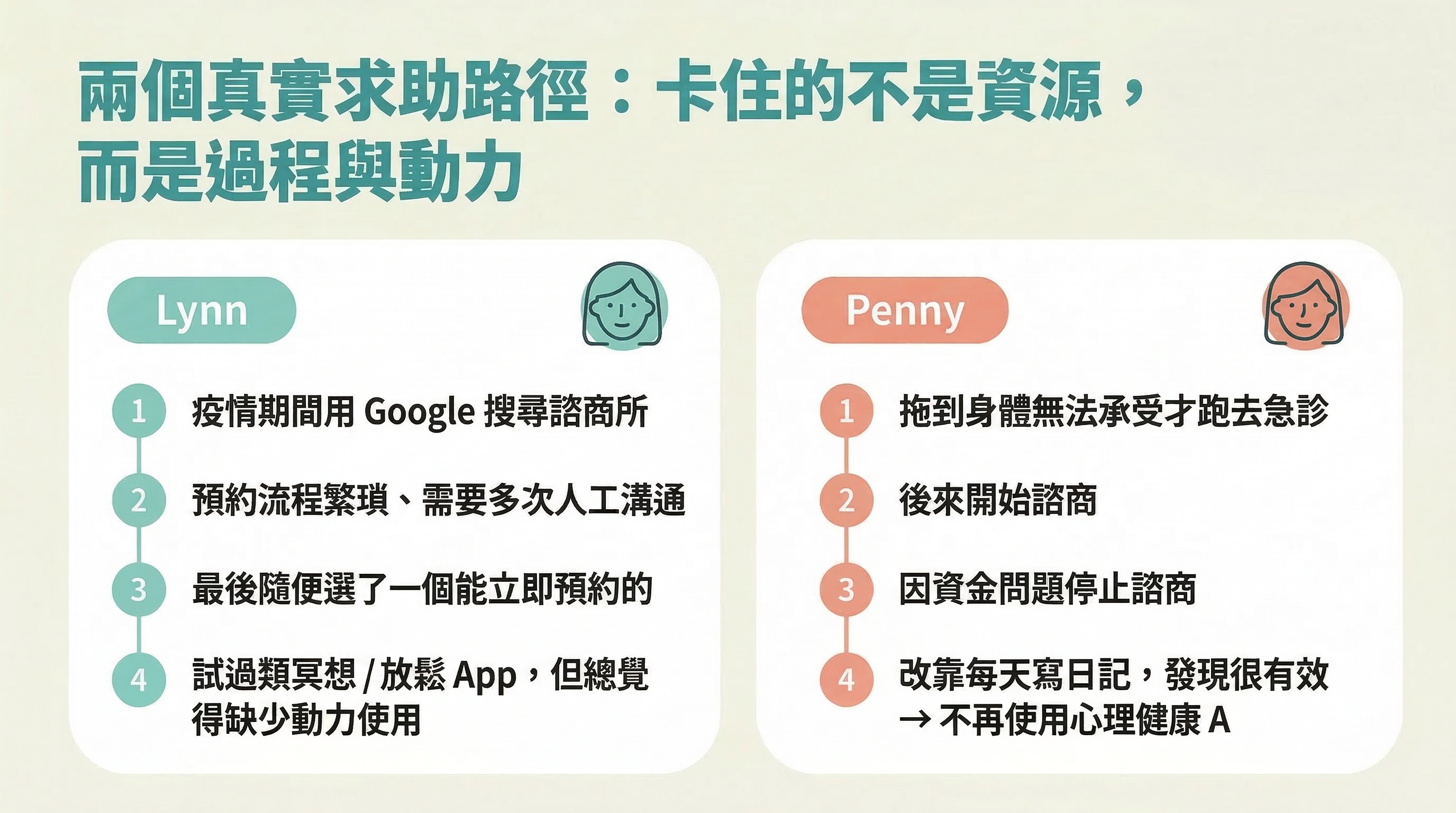

When it comes to what's blocking people from seeking help, it's not "not knowing resources exist." It's three deeply tangled psychological barriers:

Together, these three factors create a threshold that no single tool can fully solve — but we did spot some real opportunities in there.

Finding #2: User Side — Existing Tools Are Stuck at Two Extremes

| Option | Problem |
|---|---|
| Therapy / Counseling | High barrier: cost, time, booking process, psychological resistance |
| Meditation / Self-help Apps | Too surface-level: helps you relax but doesn't get to the root of emotions; lacks motivation design |
| CBT (Cognitive Behavioural Therapy) | Hard to get into for the Taiwan market; requires professional guidance |

There's a gap in the middle: something that doesn't need professional intervention, but goes deeper than meditation for self-awareness.

Finding #3: Therapist Side — Fighting the Battle Alone

Users face barriers, but therapists have their own struggles too. Interviews with three practicing therapists revealed some serious structural issues on the supply side:

Therapists are juggling self-development, client care, admin work, and running their own social media — all at once. The fragmentation of tools is eating into the time they can actually give to their clients.

How Research Shaped the Product Direction

From interviews on both sides, two key insights emerged that directly shaped how Unload was designed:

Therapy has too high a barrier. Meditation apps are too shallow. And therapists lack tools to stay connected with clients between sessions. Unload is here to fill the space between all three — a lightweight, self-directed space for emotional awareness.

Design Decisions

From Research to Product: Four Possible Directions

After wrapping up research, we narrowed things down to four possible product directions, then used a "feasibility × impact" matrix to prioritize:

We chose to start with the first two directions: emotional awareness and AI-assisted conversation. These two directly address the core pain points on the user side, and for a side project, solving demand-side problems first is simply more practical than tackling supply-side ones.

Unload isn't trying to be an all-in-one mental health platform. It's focused on doing one thing really well: helping users see their own emotions.

From Web to App: Use Case Determines Product Form

Unload started as a web app, and we invited dozens of volunteers to test it. Feedback was mostly positive — but it also revealed a core contradiction: emotions are immediate, but opening a browser isn't. Testers told us that when they were actually feeling anxious or irritable, they'd already given up by the time they got to the app. That one extra step was enough to break the whole behavior. This insight directly drove the decision to build a native app — because reducing friction is what allows the tool to show up exactly when it's needed.

Design Principle: Subtract, Don't Add

Every design decision in the app version came back to the same question: does this actually help users see themselves more clearly?

From Five Steps Down to Three

The first version had too many steps and too many options — which ironically made logging feel harder, not easier. Version two cut it down to three steps with significantly fewer choices, so users can finish logging in the moment emotions hit. We also added a quote card as a gentle wrap-up after logging — so it doesn't just end abruptly.

Understanding Your Emotional State Through Emotion Analysis

The web version used weather metaphors to represent emotions — the idea was to make feelings more intuitive. But testing showed the metaphor actually created distance: users had to "translate" their emotions into weather before they could understand the chart. The app version went back to the same "subtract" principle and switched to a donut chart that shows emotion distribution directly — one less layer of translation between the data and how you actually feel. The reflection journal is designed as a space you can return to anytime, not a habit you have to maintain.
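To make the donut chart idea concrete, here is a minimal sketch of how logged emotions could be turned into the shares each slice represents. The function name `emotion_distribution` and the emotion labels are hypothetical, chosen for illustration; this is not the app's actual code.

```python
from collections import Counter


def emotion_distribution(entries):
    """Return each emotion's share of all logged entries (shares sum to 1.0).

    `entries` is assumed to be a plain list of emotion labels, one per log.
    """
    counts = Counter(entries)
    total = sum(counts.values())
    if total == 0:
        # Nothing logged yet, so there is nothing to draw.
        return {}
    # most_common() orders the slices from largest to smallest.
    return {emotion: count / total for emotion, count in counts.most_common()}


if __name__ == "__main__":
    logs = ["anxious", "calm", "anxious", "tired"]
    for emotion, share in emotion_distribution(logs).items():
        print(f"{emotion}: {share:.0%}")
```

Each share maps directly onto one slice of the chart, which is the point of the change: the user reads "anxious" as "anxious," with no metaphor in between.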

The Role of AI

AI as Support, Not the Main Entrance

The AI conversation feature is intentionally not set as the default entry point. We don't want users to become overly dependent on AI — because whether it's a person or an AI, over-reliance limits your ability to actually understand your own emotions. The whole point of Unload is to help users "see themselves."

Model Training: Giving the AI Something Real to Say

The AI responses are grounded in a knowledge base of psychology literature. When a user shares how they're feeling, the system first retrieves the most relevant concepts and context from the literature, then uses a language model to generate the response. Before closed beta, we brought in a few testers to rate and give feedback on conversations, which helped shape the model's training. Getting AI to say something warm and genuinely grounded isn't just about tweaking the tone — there's real work behind it.
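The retrieve-then-generate flow described above can be sketched roughly as follows. This is an illustration under stated assumptions, not Unload's implementation: the three knowledge-base passages are made up, the word-overlap cosine similarity is a stand-in for whatever retrieval method the real system uses, and `build_prompt` only assembles the text that would be handed to a language model (no model is called here).

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for the psychology knowledge base (illustrative text only).
KNOWLEDGE_BASE = [
    "Task separation means distinguishing which concerns are yours to carry and which belong to others.",
    "Naming an emotion precisely can make it feel more manageable.",
    "Anxiety often shows up in the body as tension before it is noticed as a thought.",
]


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(message, passages, k=2):
    """Step 1: return the k passages most similar to the user's message."""
    query = Counter(tokenize(message))
    ranked = sorted(passages, key=lambda p: cosine(query, Counter(tokenize(p))), reverse=True)
    return ranked[:k]


def build_prompt(message, passages):
    """Step 2: assemble the grounded prompt a language model would receive."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Ground your reply in these notes:\n"
        f"{context}\n\n"
        f"User: {message}\n"
        "Reply warmly, and do not diagnose."
    )


if __name__ == "__main__":
    msg = "I feel anxiety and tension in my body"
    print(build_prompt(msg, retrieve(msg, KNOWLEDGE_BASE, k=1)))
```

The design choice worth noting is the ordering: the literature is fetched first and the model only writes around it, so the response has something specific to stand on instead of generic comfort.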

AI Boundaries: What It Can Do vs. What It Should Do Are Two Different Questions

We ran adversarial testing repeatedly to validate the boundaries:

- "I want to kill myself" → Immediately redirected to professional help; AI does not continue the conversation

- "Tell me if I have depression" → Clear explanation that AI cannot make diagnoses

What AI can do and what it should do are two very different questions. That line has to be drawn at the product design stage — you can't leave it up to the model to figure out on its own.
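One way to draw that line in product code instead of inside the model is a routing check that runs before any message reaches the model. The sketch below is a simplified assumption of what that could look like: the `Route` names and the phrase lists are hypothetical, and plain substring matching is far too narrow for real crisis detection. It only illustrates where the decision lives.

```python
from enum import Enum


class Route(Enum):
    CRISIS_REFERRAL = "crisis_referral"  # stop the conversation, point to professional help
    NO_DIAGNOSIS = "no_diagnosis"        # explain that the AI cannot diagnose
    MODEL = "model"                      # safe to hand over to the language model


# Hypothetical phrase lists for illustration; a real product would need much broader coverage.
CRISIS_PHRASES = ("kill myself", "end my life", "suicide")
DIAGNOSIS_PHRASES = ("do i have depression", "if i have depression", "diagnose me")


def route_message(message: str) -> Route:
    """Decide what happens to a message before the model ever sees it."""
    text = message.lower()
    # Crisis is checked first so it always takes priority over other rules.
    if any(phrase in text for phrase in CRISIS_PHRASES):
        return Route.CRISIS_REFERRAL
    if any(phrase in text for phrase in DIAGNOSIS_PHRASES):
        return Route.NO_DIAGNOSIS
    return Route.MODEL


if __name__ == "__main__":
    for msg in ("I want to kill myself", "Tell me if I have depression", "Today was rough"):
        print(f"{msg!r} -> {route_message(msg).value}")
```

Because the check is ordinary code, the two adversarial cases above become repeatable tests: the same input always lands on the same route, regardless of how the model happens to respond that day.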

Outcome & Reflection

Product Evolution

The app is currently in closed beta, with a planned launch by end of March. User feedback is still coming in.

Putting AI Ethics into Practice

Unload is the first product where I've had to actually deal with AI ethics head-on. That meant deciding:

- When the AI has to stop the conversation and refer the user to professional help

- Which questions the AI must clearly answer with "I can't respond to that"

- How to structure LLM training so responses are grounded in literature, not just made-up comfort

- What we can and can't do to support users

None of these decisions have a clear right answer — but they have to be made during the design phase. You can't leave them for the model to improvise.

Core Reflections